
(Ok, apparently I never actually posted this and it's just been in my drafts, whoops, sorry.)

So I actually had to redo this process like FOUR TIMES because I kept screwing something up somewhere. The biggest issue I had was making sure the mouthbag wasn't popping out of my face, because that happened basically every time I tried to do this right. Another issue was that the model really didn't fit my body. I have pretty small hands, and no matter how I tried to scale the base model, it always made it look like I had these massive hands with creepy long fingers. I ended up just rolling with it because I didn't have the energy to do a whole other render just to fix my fingers, but if I had more experience with the software I would definitely go back and fix that first. Overall, this process was really funny and also really odd at the same time. It was almost easier for me when I saw my model being all screwed up in humanly impossible ways, because that put another layer between my actual self and this thing I've created of myself, but it is still really cool to be able to share this with people. Every time I tell people that I 3D scanned my body, they're always like "wow, that's so cool, did it take a really long time? Was it expensive? Did you have to wear a special suit?" and then I tell them that we did it with an iPad, and I can't tell if they're more or less impressed by that. Either way, it's still a really cool technology and I've really enjoyed working with it.


My project is a looping animation of a man stuck in an endless world. It’s about a minute long and shows the man getting more and more flustered as time goes on because he realizes that he’s caught in a loop. I made it using the Sequencer tool in Unreal Engine, and I downloaded all of my files from various websites that host free 3D models. I decided to use a low poly style, mostly because I didn’t think my avatar would fit well with the idea I had, and also because I am not a huge fan of my avatar in the first place. I think my model looks unprofessional and would have taken away from the actual idea and what I wanted to accomplish. I also decided to use a model that didn’t have a face, for two reasons. First, it was really hard to find a model that I liked and that worked for my idea. Second, once I did find this model, I realized it was perfect because I didn’t have to worry about animating facial expressions or making sure the character was emoting properly; I only had to worry about the way the body was moving. In the end, this was a great choice and helped the audience focus on the character’s body rather than on a face that would not have been able to move properly. I did run into quite a few issues when putting this together. It might be because I don’t know how to use Sequencer properly, but I mostly had trouble working with the cameras. Since the sequence starts with the camera stationary and the character moving independently of it, I had to use multiple cameras and make sure they were placed in the exact same spot so that switching between them was seamless.
I originally had only one camera and only tracked the character for a short period of time, but for some reason, halfway through production, the camera would zoom out really, really far whenever it wasn’t tracking the character, which messed everything up. So I introduced two new cameras, one at the beginning and one at the end, to make sure this wouldn’t happen, and had the camera in the middle track the character the whole time. This seemed to solve my problem, and I only had minimal issues making sure the camera was in the right spot. Other than the camera issue, I also had a lot of trouble finding the animations I wanted to use. As great a resource as Mixamo is, its animations are pretty limiting. It has a great library of fighting and dancing animations, but there weren’t many options for smaller body movements or emotions. The main one I was looking for was one where the character would hold his head as if he had hit it, but all I could find were animations of the character reacting to getting punched; there was no good idle one for me to use. On top of that, a lot of the animations I did find were very extravagant and over the top instead of as subtle as I wanted them to be. I ended up working with this and just making a character who was extremely frightened of his situation, but I did want a more subtle transition between him realizing that he was stuck in a loop and then panicking about it. I also once again had trouble exporting the final sequence with sound. I could not find a way to do it, which was extremely frustrating, so I eventually gave up and left it without sound because it was becoming ridiculous. I think I tried exporting it at least 5 times to get the sound, and it never worked. Finally, the last issue I ran into was the actual tracking of the character in space.
I originally had all the animations set to not be in place, so the character would actually move through the animation. I expected that I would set the character’s starting point, extend the animation to the distance I wanted him to walk, and the spot where he stopped walking would become his endpoint. However, this wasn’t the case. After the character finished an animation, he would jump back to his original location, and the next animation would start from that same starting point. I tried everything I could to get these animations to work, including tracking the character’s movement on top of the walking animation, but every time there would be some sort of skip or jump back to the beginning of the animation, and the character wouldn’t end up where I wanted him. So to fix this, I went back to Mixamo and re-downloaded all of my animations, this time set to be in place. I then brought them into Unreal Engine and simply looped each animation while tracking the character across the set. This ended up being difficult too, because I had to match his footsteps to his actual speed and adjust the ending position and the time it took him to get there depending on his speed and which animation I was using. In the end, I am satisfied with how this worked out, but I have a feeling I missed a really simple fix for this issue, because I’m sure other people have to deal with it too. I also had some issues with the final transition, where the character goes from running to bumping into the tree and falling over. Since the running animation was really fast and the falling animation happened with the character in a static position, when I tried to transition between the two, the character would slow down as he ran toward the tree and then dramatically bump into it and fall over.
This is not what I wanted, so I tried separating the two animations more to get a fast fall, but everything I tried had some sort of issue with the timing or with where the character hit the tree. I wish I could have found a better fix for this, but I am glad that I successfully created a meaningful looping animation in Unreal Engine. I chose this project because I have always been fascinated by loops and by the way the audience can learn something new by watching the whole thing a second or third time, so I tried to accomplish that here. I will say that using Unreal Engine to make something cinematic was pretty difficult, though. While adding elements to the scene and setting everything up worked really well, the actual mechanics of putting in the cameras and animations were not up to par with what I expected to be working with for this project. For some reason, I found working in 3D space to be almost more limiting than working in 2D, because I had to make sure everything was properly aligned and working together all at the same time, instead of just focusing on what the camera was seeing at that very instant and moving on to the next frame or the next sequence from there. I think I would do everything about this project differently if I had the chance. While I am satisfied with the final product, I really felt like I was working with software I was completely unfamiliar with, and I had to Google basically everything about how to get things to work or why certain things weren’t working. If I could do this project again, I would start by watching more tutorials and maybe doing a practice animation that had nothing to do with my project before starting on the actual one.
One issue was that I started on my actual project the moment I sat down at the computer, and once I realized that some things weren’t working the way I wanted them to, it was almost too late to start from scratch since I had already invested so much time into it. If I had started with something else just as a test run, I have a feeling I wouldn’t have run into this issue. Overall, I am really glad that I got my first taste of 3D animation, because it gave me the chance to see what goes into something like a full-length animated film, which I had never really considered. And I just did mine in low poly with 2 camera cuts. I can’t even imagine having to deal with hair or clothing or facial emotions or basically anything more advanced than what I did here (and I REALLY struggled with even this much). I do hope to keep using Unreal and getting to know the software better so that I can eventually come back and fix this animation to make it exactly the way I want it. Also! Thanks for a great class! It was really interesting to be able to talk about so much hypothetical stuff and really dig into things I never would have considered could even be topics of discussion. I really enjoyed the last 7 weeks and I learned so much too!

Ok, it actually took me so long to figure out how to export this file, which was so stupid. I had the .avi file from Unreal Engine, but for some reason it exported without the sound, so I thought I would just bring it into iMovie and add the sound there. But iMovie wouldn’t take the .avi, so I converted it to an .mp4, and it still wouldn’t take it. I got so frustrated trying to add sound to this stupid video that I ended up just playing it on my computer, recording it with my phone, and then adding sound to that file, which is why it looks a little blurry and has lower contrast.
¯\_(ツ)_/¯ The actual process of making the video in Unreal Engine was no trouble for me. It was really tedious to add all the different models, since I had over 200 in the end with all the crowds I created, but I got into the rhythm of adding a model and then adding the animation over the top of it, so it only took me a few minutes to import all of the models into Sequencer. This is the funniest and most ridiculous project I have ever handed in for a grade, but I had so much fun doing it and I love it. I was literally trying not to laugh out loud as I worked on it in the micro lab because it was so funny to me that I was creating something this silly, but in the end I am really proud of what I came up with.

This assignment was actually much easier than I thought it was going to be. I’m not familiar with visual programming where you connect nodes, so I thought this was going to be much more complicated than it turned out to be. Granted, I still would not have come close to completing this assignment without the video tutorial, but I think I have a better understanding of how everything works now. I decided to use the Macarena dance from Mixamo because it looked hilarious and made the arms of my model twist in weird ways. I think that’s just because I didn’t rig it properly, but it was still funny. It also ended up dragging my armpits into little underarm wings, which I also thought was hilarious. When I first loaded the model into Unreal and put the texture on it, I realized that the eyes were all messed up and out of place (also hilarious, and kind of terrifying too), and I was debating between fixing it in Photoshop or just leaving it the way it was. That weird feeling came back, where I wasn’t sure whether I wanted the model to represent me as closely as possible, or whether I should try to distance myself from the model and its personality as much as I could.
I ended up leaving it the way it was, mainly because I didn’t want to spend the time fixing it in Photoshop, but maybe I’ll make another skin eventually, who knows. Once I had all the basics from the video tutorial done, like getting the Blueprint and the Blend Space set up, I decided to mess around with the settings and code a little bit. What I really wanted was to press a key and have a hundred models all drop into the scene in formation and start doing the Macarena with the song playing in the background. I couldn’t figure out how to do this without setting each individual spawn point, so I decided not to, and I also couldn’t find an easy way to play music in the scene on command. Instead, I just created an army of models all dancing in formation, so as soon as you run the game you’re faced with over 100 models all dancing at you. I love it. I really do want to keep developing this into something I can actually use. I tried using Unreal Engine before I took this class, but my computer didn’t agree with it, so I gave up. I had this whole idea planned as a gift to my brother when he left for college: I was going to create a 3D model of my childhood home, and in the basement, where he always plays video games in his little man cave, there was going to be a portal that throws you into the actual gameplay. I even got as far as creating a full model of my house in Blender, so maybe now that I have this model of myself and the means to actually put all the elements together, I might move forward with that idea.

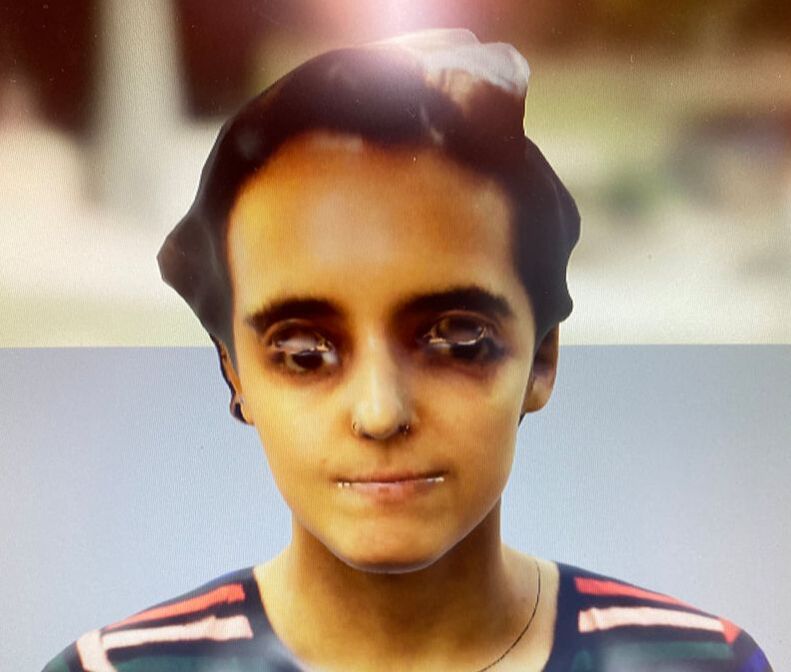

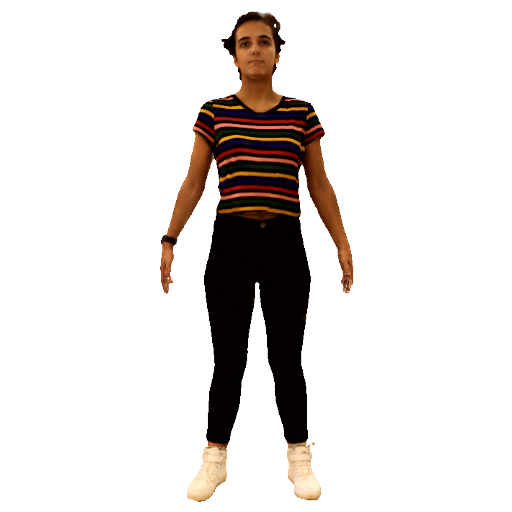

Alrighty, so here’s my 3D scan of myself.

The first thing I have to say about this is that it is SO weird to see yourself in a 3D space. I’ve never seen myself from the back in 3D, and it’s just really weird that it’s my body shape and the texture is of me, but it still doesn’t really feel like me. Super odd feeling. (Also, I have to say that seeing the unwrapped texture map of myself was simultaneously hilarious and completely horrifying.) Anyway, this took a few tries to get done. The biggest issue I had when getting scanned is that my hands move a lot. I’m a twitchy person, so standing really still for that long was kind of difficult. The other issue I had was not when I was being scanned, but when I was scanning my partner. The model kept spinning out halfway through the scan, which got pretty frustrating after the third attempt. I found out that this was most likely happening because I was tilting the iPad down to get the top of the head and the arms, which seemed to confuse the software: it couldn’t handle that rotation on the axis and responded by freaking out and spinning the model. After I figured this out, I worked really hard to keep the iPad steady, and we ended up getting some really good scans. For my scan, we did 4 of them just to be able to compare, and I ended up going with the first one. The only issues I noticed with the scan, specifically the texture, are some holes. I think it was really hard to get the top of my head in the scan, so there’s a nice big white space up there, and there are also some weird texture issues in the legs and armpit areas. I’m thinking I’ll be able to fix the texture with some Photoshop skills and then map the fixed texture back onto the model. My only concern right now is that I’m not sure the link between the fixed texture and the model will stay intact, so I have to make sure I do that properly.
Other than the weirdness of seeing myself as a 3D model on an iPad, I think the software and hardware did a good job of capturing the essence of me. I can’t say that I “relate” to the model, because I think I still have that separation in my mind, understanding that it’s just a model of myself in one position, but I have a strong feeling that this will change once we rig the model and get it moving. I think movement is an important part of who people are, and adding movement to a static rendering of myself may break down that separation between me and the model. I’ve also found myself referring to the model with the pronouns she and her, which is kind of odd because it is me; I just can’t seem to wrap my head around equating a rendered model of myself with my actual self. I think this is different from pictures or videos, where we can actually say “that’s me” because we’re able to see the way we move and the way we sound, and we can also relate ourselves to the space in which the photo or video was taken. Seeing a model of myself just floating in space and being able to manipulate its position is really odd, and I feel like it could also seem kind of intrusive at times. That model of me will be on that iPad as long as the app is installed, and anyone who uses the device will be able to tap on my model and maneuver it around however they like. Even as I was scrolling through the archive of models, it felt intrusive and weird to be looking at a scan of someone’s body without them being there or being aware of it. Overall, I think this technology is amazing and we can do so many cool things with it, but I’ve really been considering the negative implications of having such easy access to a program that can create a lifelike rendering of a real person.
In relation to the readings, I thought the one about Rogue One using Peter Cushing’s likeness without his consent (obviously, since he’s dead) was an interesting take on the idea. Even with the technology we used for this assignment, I was thinking about how easily someone can create a lifelike rendering of a person and use it in any situation. With deepfakes now becoming a huge issue online, with people using celebrities’ likenesses to create videos and some even going as far as creating pornographic content with a celebrity’s face without their consent, I think we need to be really careful about how we use this technology and make sure we’re being ethical with the applications we have for these kinds of renderings. I’m curious whether anyone in the class has other ideas about this as well. Additionally, the idea that people can relate so wholly to their avatars, for example in a game like Second Life where they play as a version of themselves, still seems pretty foreign to me. Again, I’m still having a hard time seeing the 3D model of myself as a version of me, and I think that for someone to do that, they really need to spend a lot of time with their avatar in order to break down that separation between reality and “fantasy” (although I hesitate to call it fantasy, since people see avatars as an extension of reality). It raises the question: if we were aware that we’re living in a simulation, would we do things differently? People “cheat” on significant others in online games like Second Life, but does that really count if it’s not actually their physical body? Many would say yes, since it’s still the real-life person making those decisions. And while those virtual choices and actions may seem to have no consequences, is it possible that those virtual decisions are an extension of our real-life personalities and character traits?
If we were to live in a world with no consequences, like the ones these virtual worlds simulate, would we make radically different choices knowing that nothing can “go wrong” or truly affect us negatively? I personally have hope in the goodness of the human race and would like to think that many people would still stay true to themselves, but the realist in me knows that, given the way so many people act online and in virtual spaces, my optimism may be misplaced for many of them. This experience of creating a 3D model of myself that will most likely live online longer than I will live on this earth has really gotten me thinking about how we view ourselves in the physical world and online, and how similar those different facets of our lives actually are.